In a new section of the Intechnica blog, titled “Performance Nightmares”, we’re going to take a closer look at some of the most notorious, high-profile, brand-damaging website performance failures in the history of the internet. Now, the internet is a huge place, and every day, all sorts of websites struggle with performance issues of all kinds; not just the big websites, but also many smaller sites. A quick search on Twitter for “slow website” or “website down” shows the scope of this.

https://twitter.com/lukey868/status/191857279111405568

https://twitter.com/Frewps/status/191704594269736961

Of course, each of these problems could have any number of causes, but these comments are entirely public, and potentially hundreds or even thousands of people could read these negative tweets. But bad publicity is not the only negative effect of slow or failing websites, as a previous post on this blog explains. The business impact can be very damaging too.

So to kick off “Performance Nightmares”, let’s jump right in with 15 examples spanning the last 11 years. I’ve split them into four categories: Marketing Oversights, Overwhelming Public Interest, Unforeseeable Events and Technical Hiccups.

Be sure to check back (or subscribe) to the blog to see more Performance Nightmares as they are reported!

Marketing Oversights

The goal of any marketing campaign is to generate awareness and interest in a brand or product, and drive customers to find out more, ultimately converting leads to sales. In the past, the more interest you directed towards your product the better, as long as supply met demand. But in the following cases, so much more demand was created than expected that the websites fell down, locking out potential customers (not to mention regular and existing customers). While the companies often say that “it’s a good problem to have” or “we were too successful for our own good”, the under-performing website has shot them in the foot, and customers old and new end up frustrated. Here are a few high-profile examples…

1. Nectar

When directing people to a website for a special offer, it’s worth checking they will all be able to access it

Setting the scene: When it launched in 2002, loyalty card scheme Nectar pushed hard with TV adverts and email marketing directed at over 10 million households, driving people to their newly launched service. While they had phone lines and direct mail as means of collecting registrations, Nectar attempted to save costs by offering a rewards incentive to people registering online via the website.

Performance Nightmare: While Nectar prepared for this by increasing their server capacity six-fold, a peak of 10,000 visitors in one hour was enough to bring the site down for three days. Nectar cited the complexity of the registration process (security & encryption) as a bottleneck.

Source

2. Glastonbury Festival

Website crashes cause people to express real disappointment

Glastonbury: Messy business

Setting the scene: As an iconic music festival of the past 42 years, Glastonbury needs no introduction. In fact, its fame and popularity on the global music calendar have been as much a curse as a gift for some time. Demand has long outstripped the availability of tickets: £2 million was spent in 2002 on a giant fence to keep out people who did not have a ticket, and festival-goers long complained of having to pay inflated prices to ticket touts online. The problem was compounded in 2005 when information leaked about acts like Oasis and Paul McCartney being set to headline.

Performance Nightmare: The ticketing website got two million impressions in the first five minutes, overloading the system and resulting in disappointment for many people seeking tickets. Bad news spreads fast: the BBC was flooded with emails about the service, and there were reports of people selling t-shirts displaying the error message shown by the website.

Source

3. Dr Pepper

Offer a free drink to 300 million people… what’s the worst that can happen?

Setting the scene: This is almost the poster boy for a marketing campaign that did not take the limits of a website’s performance into account. Dr Pepper promised that, if Guns N’ Roses released the “Chinese Democracy” album in 2008, they would give everyone in the US a free Dr Pepper. When the album was released, Dr Pepper made good on their offer… but limited it to just one day. There are 300 million people in the United States. I think you can see where this is going.

Performance Nightmare: If you guessed that the site was overwhelmed and crashed, you’d be right. The traffic spiked dramatically, forcing Dr Pepper to add more server capacity and extend the offer by a day… which probably would have been a good idea in the first place.

Source

4. Reiss

The gift of free publicity can be a double-edged sword for web performance

Setting the scene: The internet has had a massive effect on the fashion industry, from e-commerce retail through to trend setting and social media. Since her engagement and the globally covered event of her marriage to Prince William, Kate Middleton has become a fashion icon. And when you have an event as watched by the world as the first meeting of the US Presidential first family with “Kate & Wills”, in May 2011, every fashion-hunter had their eye on what the duchess chose to wear. This was great publicity for the designer in question, Reiss…

Performance Nightmare: … Until the sheer volume of interest crashed their website for two and a half hours. While it probably wasn’t a formal marketing campaign as such, Kate Middleton meeting the Obamas was a major fashion event for many, and such a high-profile endorsement draws more traffic than perhaps any traditional marketing campaign, something the website was unable to cope with.

Source

5. Ticketmaster, Ticketline, See Tickets, The Ticket Factory

Some events are in such high demand, they can take out multiple sites in one day

Setting the scene: Take That were one of the biggest boy bands of the 90s, and when they returned for a nationwide tour, women in their mid-20s would trample over their fathers to get tickets. When the band announced a huge tour with the full line-up, including Robbie Williams, the response was frenzied among fans. Tickets were stocked by many major websites, including Ticketmaster, Ticketline, See Tickets and The Ticket Factory.

Performance Nightmare: The demand was so high for tickets that fans flooded and crashed all four sites mentioned above. Would-be ticket buyers were forced to wait, in some cases all day, for their order to be processed successfully (if they could get on the sites at all), and the slow running of the websites continued even after the tickets were all gone. Considering the popularity of Take That, it should be no surprise that this negative experience was widely shared and reported on in the mainstream media. Maybe those Take That fans should have had a little patience.

Source

6. Paddy Power

You think your website will perform when it matters… Wanna bet?

Don’t let your site fall at the first hurdle

Setting the scene: The Grand National is the biggest betting event of the year in the UK, and for many people it is the only one they bet on. Business is at its peak for bookies and betting websites, with the British public spending £80 million on bets on each year’s Grand National. With fierce competition between betting websites to win the business of both regular and once-a-year betters, many offer special deals, such as Paddy Power’s “Five Places” payout offer.

Performance Nightmare: So great was the demand for bets on Paddy Power, higher than on any other day in its history in fact, that the website came crashing down mere minutes before the race. The site was down for just 20 minutes, coming back up 15 minutes before the race, but even such a short outage was a costly hiccup when there are many other betting websites to choose from. Studies show that, at busy times, 75% of customers will move on to a competitor’s website rather than suffer delays, and that effect is only compounded in a case as time-sensitive as getting good odds on the Grand National. 88% of people won’t come back to a website after a bad experience; Paddy Power has since offered a free bet to all its customers as damage control.

Source

Overwhelming Public Interest

Clearly there is a costly disconnect between marketing efforts and website performance considerations, but sometimes simple public interest in a product or service can back a website into a corner. Government and public service websites are more and more becoming essential resources for the general public, and especially at service launches or at times of peak interest, these key web applications need to be able to scale – but sometimes don’t…

7. Swine Flu Pandemic

Curiosity killed this website of high public interest

Setting the scene: Back in July 2009, the UK was caught up in the supposed “Swine Flu Pandemic”. Some reports went as far as to say that up to 60,000 could die of swine flu. To help ease the strain on the medical sector, the government decided to launch a website with a check list of the symptoms of swine flu, giving appropriate advice to those who had them, while putting those without at ease.

Performance Nightmare: With media scaremongering at a high, the website received 2,600 hits per second, or 9.3 million hits per hour, just two hours after launching. Unsurprisingly, the website crashed temporarily, although it was quickly restored; this was put down to most people visiting out of “curiosity” and quickly leaving the site after deciding on their diagnosis.
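As a quick sanity check on those two figures, here is a minimal Python sketch of the conversion. The function name is purely illustrative, and it assumes the per-second rate was sustained for a full hour, which the original reports don't confirm.

```python
SECONDS_PER_HOUR = 60 * 60


def hits_per_hour(hits_per_second: float) -> float:
    """Convert a sustained per-second hit rate into an hourly total."""
    return hits_per_second * SECONDS_PER_HOUR


# Figure quoted for the swine flu site: 2,600 hits per second.
swine_flu_hourly = hits_per_hour(2_600)
print(f"{swine_flu_hourly / 1e6:.2f} million hits per hour")
```

Under that assumption the hourly total comes out at about 9.36 million, which is consistent with the quoted figure of 9.3 million.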

Source

8. UK Police Crime Maps

Even with higher than expected demand, websites can still be guilty of not being built to scale

Setting the scene: In a move to increase transparency in crime statistics, the UK government launched a website in February 2011 allowing members of the public to get access to information about crime rates in their areas via markers on an interactive map. This received mainstream news coverage, with questions being raised about the accuracy & impact of the reports; for example, the effect on insurance rates or house prices.

Performance Nightmare: The crime maps were of such great public interest that the site received 18 million hits an hour on its first day, bringing it tumbling down within a few hours in a very public fashion. While it might seem reasonable for a website to fall down under 18 million hits an hour, it was clear that the site simply wasn’t designed to perform at any kind of scale, despite using Amazon EC2 machines to spin up extra capacity; “you still need to build a site that scales without needing 1000’s of servers”.

Source

9. Census (1901 UK & 1940 USA)

Learn from other people’s mistakes, and make sure you can scale up enough

Data entry for the 1940 census web archive… sort of

Setting the scene: Although separated by 39 years and the Atlantic Ocean, these two census reports caused a very similar problem to their respective websites. As the census information came into the public domain (in 2002 for the UK, 2012 for the States), each government commissioned websites to host the historical data, which was placed into databases and images scanned in for downloading. Both sites expected a high level of interest and part of their remit was to cope with the high level of load (the US census was expected to support 10 million hits a day, while the UK census was required to cater for 1.2 million users per day).

Performance Nightmare: Demand for each service was so overwhelming that it exceeded the predefined targets set for both websites, bringing them down within hours. To start with the 2002 failure of the 1901 UK census, the website reached its 1.2 million hit limit within just 3 hours, and the site was closed while ways to make it scale were investigated. It was closed in January and eventually reopened in August, with full functionality being restored in November. Ten years later, the US government apparently hadn’t heeded this lesson in scaling a census website, as its site received 22.5 million hits within 3 hours of launching. Again, despite being hosted in Amazon’s AWS cloud, the site didn’t scale to meet the demand, and it was forced to restrict its functionality when it came back online the next day.
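To put those numbers side by side, here is a rough Python sketch comparing the launch traffic with each site's daily target. It is a back-of-the-envelope calculation, not anything taken from the original reports: the function name is made up for illustration, it naively assumes the first three hours' rate would have continued for a full day, and it treats the UK target of 1.2 million "users" as directly comparable to hits.

```python
HOURS_PER_DAY = 24


def overshoot_factor(observed_hits: float, window_hours: float,
                     daily_target: float) -> float:
    """Project the observed rate over 24 hours and divide by the daily target."""
    projected_daily = observed_hits / window_hours * HOURS_PER_DAY
    return projected_daily / daily_target


# 1901 UK census site: 1.2 million in 3 hours against a 1.2 million daily target.
uk_1901 = overshoot_factor(1_200_000, 3, 1_200_000)

# 1940 US census site: 22.5 million in 3 hours against a 10 million daily target.
us_1940 = overshoot_factor(22_500_000, 3, 10_000_000)

print(f"UK 1901 census: about {uk_1901:.0f}x the daily target")
print(f"US 1940 census: about {us_1940:.0f}x the daily target")
```

On those assumptions, the UK site was running at around 8 times its planned daily load and the US site at around 18 times, so neither target was close to the real level of demand.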

Source

10. London 2012 Olympics

Web performance can be more like a marathon than a sprint

Setting the scene: The Olympics is often a cause of controversy, not least because of the sheer level of interest it generates. Cities all over the world clamour to host the global event, as it draws in tourism and revenue, but with that come social, economic and logistical challenges, as even advanced cities prepare to welcome a sudden spike in population.

Performance Nightmare: There is almost too much to write about this one. In April 2011, a window to buy 6.6 million tickets through a public ballot came to an end; as tickets were not sold on a first-come, first-served basis, many people waited until the last minute to decide which tickets to bid on. The website slowed to a crawl under heavy load late on the last day, forcing the six-week window to be extended by several hours. A few months later, the Olympics ticket resale site opened, allowing people to buy and sell official tickets among themselves, but this also failed to cope with the strain of demand, slowing to a crawl. More problems arose in December and January, with more ticket website outages and cases of events being oversold.

Source

11. UCAS

Increasing adoption of the internet in general can impact your service performance

Setting the scene: Compared to post or call centres, the internet is a cost-effective way to collect information from lots of people at once, and with more people than ever having access to internet services, it makes sense to expect the public to use them. Indeed, UCAS now uses its website to allow students to book places on courses with vacancies in the clearing stage of university applications.

Performance Nightmare: In 2011, 185,000 students were chasing just 29,000 unfilled course places. The number of hopeful students logging into the UCAS clearing site quadrupled from the previous year. UCAS was forced to shut down the site for over an hour to cope with the volume of incoming traffic, as students were dependent on the service to find out the status of their applications.

Source

12. Floodline

Sometimes it’s not the volume of traffic, but what the traffic is doing that causes problems

Disclaimer: Not a realistic danger of a flooded website

Setting the scene: The UK Environment Agency’s National Floodline was set up in 2002 to provide instant information via call centre or over the web about potential flood dangers across the UK. However, heavy rainfall over the Christmas and New Year of 2002/2003 caused a surge of activity on both channels.

Performance Nightmare: The sudden demand and searches for information made the website suddenly unavailable for many. As the risk of flooding rose, phone enquiries climbed to a peak of 32,650 calls a day, and as people failed to get through, many turned to the website, where they would execute complicated searches in order to establish the impact of flooding in their area. At the peak, on 2 January, 23,350 people were hitting the site, and while the site was built to support a high number of users (and had successfully done so in the past), it was the complexity of the searches that was the main cause of bottlenecks. As the Environment minister told a parliamentary committee, the website crash (which took the site out for several days) was not helped by the fact that so many people were at home over that period “and had little else to do except surf the net and look for flood information”.

Source

Technical Hiccups

While website performance problems are only brought to light when the site in question needs to perform well more than ever, and site owners find themselves “victims of their own success” when a marketing push or genuine public interest floods their website, there are also times when a glitch or error can bring on a Performance Nightmare. From hardware failure through to human error, such instances have proven to cause serious problems ranging from bad PR through to legal woes, and of course have cost their victims a lot of money.

13. Tesco

Customers needing a service won’t hesitate to go elsewhere

Setting the scene: Online grocery site Tesco.com is a service used to order shopping for home delivery in the UK. Many people use it for their weekly grocery shop, as part of a busy lifestyle or perhaps through being unable to physically get to and from a supermarket. Tesco makes an estimated £255 million a year through online sales.

Performance Nightmare: In September 2011, the Tesco online service was halted for 2 hours by “technical glitches”. Disgruntled customers, who in some cases depend on getting specific delivery slots, were quick to go elsewhere with their custom, as many other UK supermarkets now offer an online delivery service.

Source

14. TD Waterhouse

A case of the financial impact being all too apparent

Setting the scene: TD Waterhouse, now known in the US as TD Ameritrade and elsewhere as TD Direct Investing, is an individual investment services company. Customers use its online service to order stocks and shares. As of 2001, it was the second largest discount broker in the US.

Performance Nightmare: The stockbroker’s website suffered significant outages, which prevented customer orders from being processed on 33 different trading days between November 1997 and April 2000. The outages lasted up to 1 hour 51 minutes. This, along with TD Waterhouse’s failure to advise customers about alternative order methods, plus a general lack of customer service around the matter, caused the New York Stock Exchange to fine TD Waterhouse $225,000. The company put the outages down to “software issues”. The Securities and Exchange Commission released a report in January 2001 calling on brokerage firms to improve areas such as performance.

Source

15. JP Morgan Chase

Communication and prompt action are key when customers suffer from a web performance failure

Setting the scene: American bank JP Morgan Chase, which as of 2010 had $2 trillion in assets, provides an online banking service for its customers to manage their accounts and make transactions.

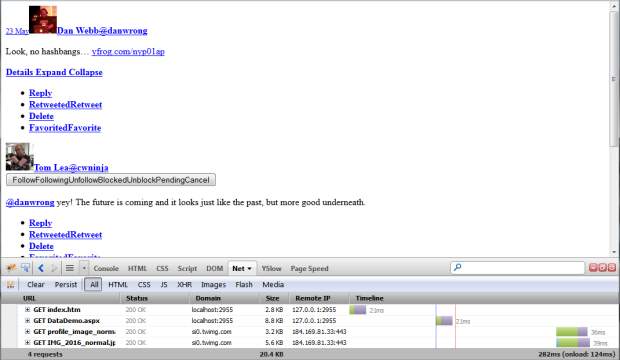

Performance Nightmare: On 14th September 2010, Chase bank’s online service went down “sometime overnight”, causing inconvenience for customers, who took to Twitter to vent. One user was quoted as tweeting “Dear Chase Bank, I have about 10 million expense reports to do, please get your act together so I can see my transactions online!” While occasional online bank outages aren’t rare, in this case the outage lasted around 18 hours.

Source

Got a contribution to the list? Leave it in the comments below!

Want to avoid a web performance nightmare of your own? Check out Intechnica’s Event Performance Management service!